In scientific computing, optimality of algorithms is not always something which receives full consideration by users-- if you want to run a sort or solve some combinatorial problem, you are more concerned with making the algorithm work than looking into how fast it runs. But in dealing with very large data sets, reducing the limiting behavior of your algorithm from

N^2 to NlogN can reduce your runtime from something on the order of years to something on the order of seconds. So while there are many computational problems out there for which solutions are known to exist, the practical matter of implementing such solutions is so expensive that they are effectively useless-- but if a new algorithm could be found which would reduce their runtime, we might suddenly be able to use them.

This is the driving motivation behind much of the work that goes into

quantum computing. Because bit state in a quantum computer is probabilistic rather than binary, the computer operates in a fundamentally different way, and we can design algorithms which take such differences into account. One vivid example is

Grover's Algorithm for searching an unsorted array. Here's a good description from Google labs:

Assume I hide a ball in a cabinet with a million drawers. How many drawers do you have to open to find the ball? Sometimes you may get lucky and find the ball in the first few drawers but at other times you have to inspect almost all of them. So on average it will take you 500,000 peeks to find the ball. Now a quantum computer can perform such a search looking only into 1000 drawers.

So if you were opening one drawer a second, the traditional algorithm would take you an average of six days to run, while the quantum algorithm would take you a little under 17 minutes.

(Now, say each of those million drawers represented a different combination of letters and numbers, and you were trying to find the drawer/combination which corresponded to the password to someone's email account. Encryption standards which would be secure against attacks from a traditional computer are easily bypassed by quantum algorithms.)

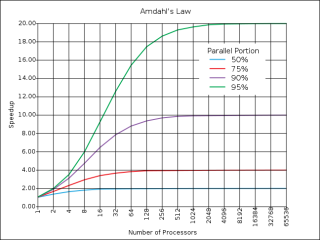

While quantum computing still has a ways to go, parallel programming is already providing another alternative to

traditional computer architecture. In parallel programming, you split your code up and send it to a number of computers running simultaneously (for our million-drawer problem: say you had 9 other people to help you, you could each search a different set of 100,000 drawers and it would only take 50,000 steps on average for the ball to be found.) So the trick in parallel programming is to figure out the right way to eliminate all the bottlenecks in your code and split up your task across processors as efficiently as possible.

Now, what about a task like image recognition? If you had a couple thousand processors at your disposal and a single image to feed them, what is the most efficient way for your processors to break up that image so that between them they can reconstruct an understanding of what it depicts? You might decide to give each computer a different small piece of the image, and tell it to describe what it sees there-- maybe by indicating the presence or absence of certain shapes within that piece. Then have another round of computers look at the output of this first batch and draw more abstract conclusions-- say computers 3, 19, and 24 all detected their target shape, so that means there's a curve shaped like such-and-such. And continue upwards with more and more tiers representing higher and higher levels of abstraction in analysis, until you reach some level which effectively "knows" what is in the picture. This is how our current understanding of the visual cortex goes-- you have cells with different receptive fields, tuned to different stimulus orientations and movements, which all process the incoming scene in parallel, and in communication with higher-level regions of the brain.

It would be interesting, then, to see what sensory-processing neuroscience and parallel programming could lend one another. Could the architecture of the visual cortex be used to guide design of a parallel architecture for image recognition? Assuming regions like the visual cortex have been evolutionarily optimized, an examination of the parallel architecture of the visual processing system could tell us a lot about how to best organize information flow in parallel computers, and how to format the information which passes between them. Or in the other direction, could design of image-recognition algorithms for massively parallel computers guide experimental analysis of the visual cortex? If we tried to solve for the optimal massively-parallel system for image processing, what computational tasks would the subunits perform, and what would their hierarchy look like-- and could we then look for these computational tasks and overarching structure in the less-understood higher regions of the visual processing stream? It's a bit of a mess because the problem of image processing isn't solved from either end, but that just means each field could benefit from and help guide the efforts of the other.

So! Brains are awesome, and Google should hire neuroscientists. Further reading:

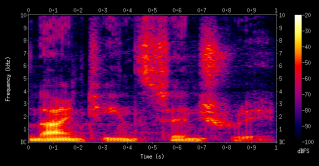

NIPS: Neural Information Processing Systems FoundationCosyne: Computational and Systems Neuroscience conferenceIntroduction to High-Performance Scientific Computing (textbook download)Message-Passing Interface Standards for Parallel MachinesGoogle on Machine Learning with Quantum AlgorithmsQuantum Adiabatic Algorithms employed by GoogleOptimal Coding of Sound